Java Virtual Threads (Project Loom) in Production: Migration Guide, Pitfalls, and Benchmarks

Java 21 made virtual threads a production-ready feature. For most IO-bound services, they eliminate the need for reactive programming while delivering the throughput of non-blocking I/O with the simplicity of synchronous code.

Table of Contents

- Introduction

- Problem Statement: The Thread-Per-Request Bottleneck

- How Virtual Threads Work: The JVM Scheduler

- Spring Boot Integration: Enabling Virtual Threads

- Thread Pinning: The Most Important Pitfall

- JDBC and Connection Pool Considerations

- Structured Concurrency: Composing Virtual Thread Tasks

- Performance Benchmarks: Real-World Numbers

- Pros and Cons

- Common Mistakes

- Conclusion

Introduction

For two decades, Java's concurrency model was dominated by OS threads. Each thread consumes roughly 0.5–2 MB of stack memory and requires a kernel context switch when blocked. This made high-concurrency servers expensive to run: supporting 10,000 simultaneous requests meant either carefully sized thread pools, reactive programming frameworks like Project Reactor or RxJava, or accepting underutilized hardware.

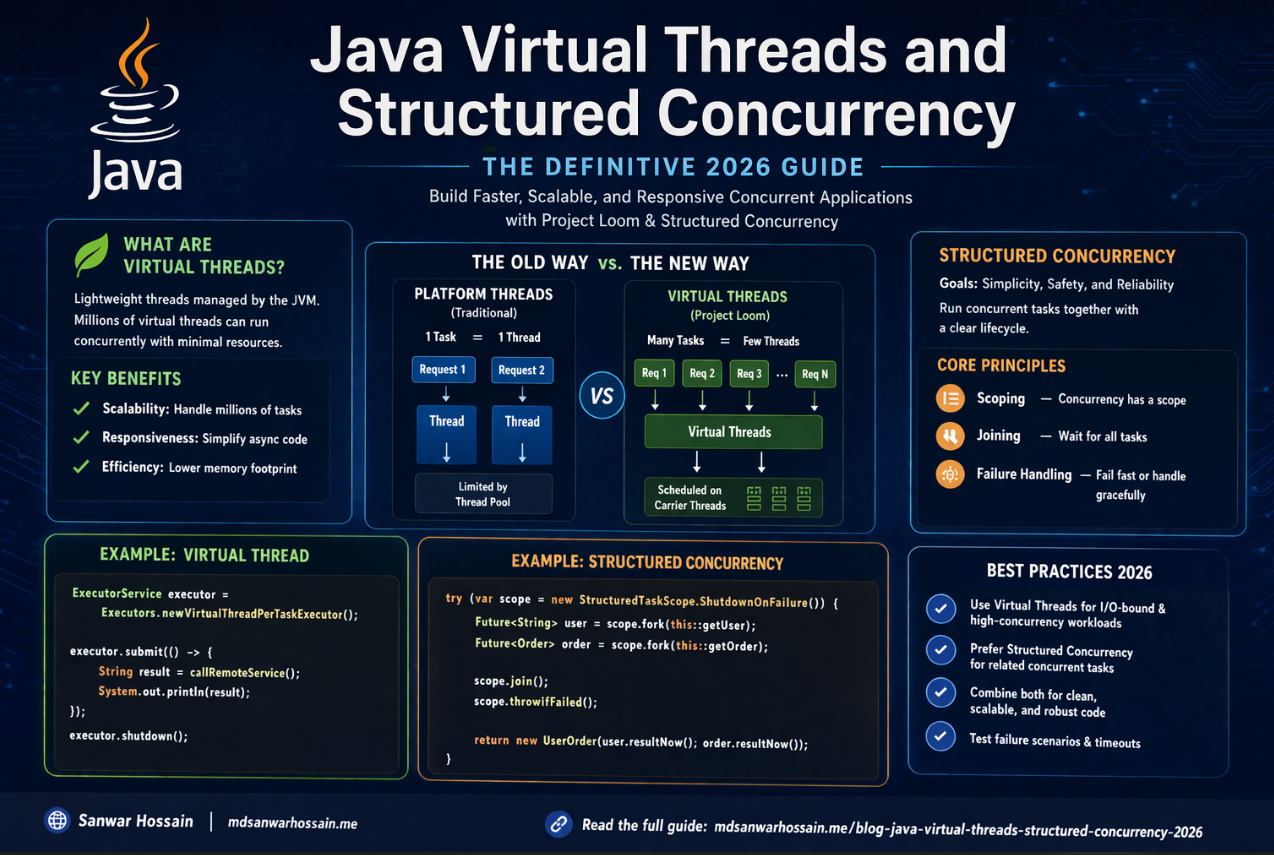

Virtual threads, developed under Project Loom and finalized in Java 21 (JEP 444), are lightweight threads scheduled by the JVM rather than the OS. A single OS thread (called a carrier thread) can multiplex thousands of virtual threads. When a virtual thread blocks on I/O, the JVM unmounts it from the carrier thread, which then picks up another virtual thread. This is transparent to application code: you still write straightforward synchronous blocking code, and the JVM handles the scheduling. For IO-bound workloads, throughput is no longer capped by the size of a thread pool, and application logic does not have to change.

Problem Statement: The Thread-Per-Request Bottleneck

The classic Spring MVC model assigns one OS thread per request. When that thread makes a JDBC call or an HTTP call to a downstream service, it blocks until the response arrives. For latency-sensitive services with many concurrent users, thread pool exhaustion becomes the bottleneck. You tune Tomcat's maxThreads upward, reserve more native memory for thread stacks (which live outside the Java heap), and eventually hit diminishing returns where context-switch overhead dominates CPU usage.
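To make the ceiling concrete, Little's law gives a rough upper bound: if every request holds one pooled thread for its whole duration, a pool of N threads completes at most N divided by the mean request time. The sketch below only does that arithmetic. The class name and the figures (200 threads, 100 ms per request) are hypothetical and not taken from any measurement.

```java
final class ThreadPoolCeiling {
    /** Upper bound on requests/second when every request holds one pooled thread for its full duration. */
    static double maxRequestsPerSecond(int threads, double meanRequestMillis) {
        return threads * 1000.0 / meanRequestMillis;
    }

    public static void main(String[] args) {
        // Hypothetical figures: 200 worker threads, 100 ms per request (mostly waiting on I/O).
        System.out.println(maxRequestsPerSecond(200, 100.0)); // prints 2000.0
        // Doubling downstream latency halves the ceiling unless the pool grows too.
        System.out.println(maxRequestsPerSecond(200, 200.0)); // prints 1000.0
    }
}
```

The bound depends only on pool size and time spent per request, which is why slow downstream calls exhaust a pool even when the CPU is mostly idle.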

Reactive programming solves this by rewriting business logic in a non-blocking, callback-oriented style. It works — systems like Netflix's API gateway handle millions of requests per second with reactive stacks — but the cognitive overhead of reactive pipelines, especially for debugging and exception handling, is significant. Virtual threads offer an alternative path: you get near-identical throughput benefits while writing code that reads like standard synchronous Java.

How Virtual Threads Work: The JVM Scheduler

Virtual threads are instances of java.lang.Thread but are not tied one-to-one to an OS thread. The JVM maintains a pool of carrier threads (by default sized to the number of available CPU cores) and mounts virtual threads onto them for execution. When a virtual thread makes a blocking call such as a JDBC query or an HTTP request, the JVM unmounts it from its carrier thread and stores its stack on the heap. The carrier thread is then free to execute other virtual threads. When the blocking call completes, the virtual thread is rescheduled for mounting. Not every blocking operation unmounts: many filesystem operations still block the carrier thread, and the scheduler compensates by temporarily adding carrier threads.

The key insight is that virtual thread stacks live on the heap, not in fixed OS stack memory. This means you can create millions of virtual threads without running out of memory in the way you would with OS threads. Creating a virtual thread costs roughly a few hundred bytes initially, growing only as the call stack grows.
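As a rough illustration of how cheap blocked virtual threads are, the following sketch starts a large number of them, lets each one sleep, and counts how many finish. It assumes Java 21 or later. The class name, the thread count, and the sleep duration are arbitrary choices for the example.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

final class ManyVirtualThreads {
    /** Starts `count` virtual threads that each block for `pause`, then returns how many finished. */
    static int runSleepers(int count, Duration pause) {
        AtomicInteger finished = new AtomicInteger();
        List<Thread> threads = new ArrayList<>(count);
        for (int i = 0; i < count; i++) {
            threads.add(Thread.ofVirtual().start(() -> {
                try {
                    Thread.sleep(pause); // while sleeping, the virtual thread is unmounted from its carrier
                    finished.incrementAndGet();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            }));
        }
        for (Thread thread : threads) {
            try {
                thread.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for sleepers", e);
            }
        }
        return finished.get();
    }

    public static void main(String[] args) {
        // 10,000 is an arbitrary illustration of a count that would be costly with platform threads.
        System.out.println("finished = " + runSleepers(10_000, Duration.ofMillis(100)));
    }
}
```

Because the sleeping threads are parked on the heap rather than occupying OS threads, the run should finish in roughly the sleep time plus scheduling overhead, though the exact figure depends on the machine.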

Spring Boot Integration: Enabling Virtual Threads

Spring Boot 3.2+ provides first-class support for virtual threads. Enabling them requires a single configuration property:

    # application.properties
    spring.threads.virtual.enabled=true

When this property is set, Spring Boot configures the embedded Tomcat or Jetty server to handle requests on virtual threads instead of the traditional worker thread pool. Every incoming request runs on a new virtual thread. The application code does not need to change: JDBC calls, RestTemplate calls, and Feign clients keep working as ordinary blocking calls.

For programmatic usage outside Spring Boot, you create virtual threads using the standard API:

    // Java 21: creating a virtual thread directly
    Thread vt = Thread.ofVirtual().start(() -> {
        // While this call blocks, the virtual thread unmounts and frees its carrier thread
        String result = fetchFromDatabase();
        process(result);
    });

    // Using an ExecutorService that starts one virtual thread per task
    // (imports: java.util.concurrent.ExecutorService, java.util.concurrent.Executors)
    try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
        executor.submit(() -> handleRequest(request));
    } // close() waits for submitted tasks to finish

Thread Pinning: The Most Important Pitfall

Virtual threads do not always yield their carrier thread when blocking. Two specific cases cause pinning — where the virtual thread holds its carrier thread even while blocked, eliminating the throughput benefit:

1. Synchronized blocks: A virtual thread that blocks while inside a synchronized method or block stays pinned to its carrier. If your application uses legacy libraries that perform I/O under synchronized, those sections will not benefit from virtual thread multiplexing. This applies to Java 21; JDK 24 removes the limitation (JEP 491).

2. Native methods: Calls into native code via JNI can also cause pinning.

To detect pinning on Java 21, run your application with the JVM flag -Djdk.tracePinnedThreads=full. The JVM prints a stack trace whenever a virtual thread blocks while pinned. Common offenders include older JDBC drivers, certain Spring Security internal code paths, and legacy ORM libraries. Where you control the code, replace a synchronized section that guards a blocking call with java.util.concurrent.locks.ReentrantLock, which does not cause pinning.
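The cost of pinning can be observed with a small experiment. The sketch below runs the same simulated blocking call under a synchronized method and under a ReentrantLock, giving every task its own guard so that the tasks never contend with each other. All names are hypothetical, and the 50 ms sleep merely stands in for a JDBC or HTTP call. On Java 21 the synchronized variant is expected to slow down once tasks outnumber carrier threads; on JDK 24 and later the two variants should behave alike.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.locks.ReentrantLock;

final class PinningDemo {
    // Hypothetical stand-in for a blocking JDBC or HTTP call.
    private static void blockingCall() throws InterruptedException {
        Thread.sleep(Duration.ofMillis(50));
    }

    static final class SynchronizedGuard {
        synchronized void call() throws InterruptedException {
            blockingCall(); // on Java 21 the virtual thread stays pinned while it sleeps here
        }
    }

    static final class LockGuard {
        private final ReentrantLock lock = new ReentrantLock();

        void call() throws InterruptedException {
            lock.lock();
            try {
                blockingCall(); // the virtual thread can unmount while it sleeps here
            } finally {
                lock.unlock();
            }
        }
    }

    static long millisWithSynchronized(int tasks) {
        List<Callable<Void>> work = new ArrayList<>();
        for (int i = 0; i < tasks; i++) {
            SynchronizedGuard guard = new SynchronizedGuard(); // one monitor per task: no contention
            work.add(() -> { guard.call(); return null; });
        }
        return time(work);
    }

    static long millisWithReentrantLock(int tasks) {
        List<Callable<Void>> work = new ArrayList<>();
        for (int i = 0; i < tasks; i++) {
            LockGuard guard = new LockGuard(); // one lock per task: no contention
            work.add(() -> { guard.call(); return null; });
        }
        return time(work);
    }

    /** Runs every task on its own virtual thread and returns elapsed wall time in milliseconds. */
    private static long time(List<Callable<Void>> work) {
        long start = System.nanoTime();
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (Callable<Void> task : work) {
                executor.submit(task);
            }
        } // close() waits for all submitted tasks
        return Duration.ofNanos(System.nanoTime() - start).toMillis();
    }

    public static void main(String[] args) {
        int tasks = 200; // arbitrary; chosen to exceed the carrier thread count on typical machines
        System.out.println("synchronized:  " + millisWithSynchronized(tasks) + " ms");
        System.out.println("ReentrantLock: " + millisWithReentrantLock(tasks) + " ms");
    }
}
```

Since no two tasks share a guard, any gap between the two timings comes from carrier threads being held during the sleep, not from lock contention.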

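    // Pinning risk on Java 21: blocking inside synchronized holds the carrier thread
    synchronized (lock) {
        result = jdbcTemplate.queryForObject(sql, String.class);
    }

    // Better: ReentrantLock lets a blocked virtual thread unmount.
    // Declare the lock as a field (import java.util.concurrent.locks.ReentrantLock).
    private final ReentrantLock lock = new ReentrantLock();

    // Inside the method, always release the lock in a finally block
    lock.lock();
    try {
        result = jdbcTemplate.queryForObject(sql, String.class);
    } finally {
        lock.unlock();
    }

JDBC and Connection Pool Considerations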

Virtual threads change the economics of database connection pooling. With OS threads, a pool of 50 connections matches a thread pool of 50 threads cleanly. With virtual threads, you might have thousands of concurrent virtual threads but still need to limit active JDBC connections to protect the database. HikariCP works with virtual threads, but you must set maximumPoolSize deliberately — not based on thread count, but on what your database can handle. A typical production setting might be 20–100 connections regardless of how many virtual threads are running concurrently.
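The decoupling of thread count from connection count can be sketched without a real database. In the example below a Semaphore stands in for the pool's maximumPoolSize; it is not the HikariCP API, and the class name, the method names, and the 5 ms query latency are all hypothetical. Many virtual threads issue requests, but the number of simulated queries in flight never exceeds the configured limit.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;

final class BoundedDatabaseAccess {
    private final Semaphore permits; // stand-in for the connection pool's maximumPoolSize
    private final AtomicInteger inFlight = new AtomicInteger();
    private final AtomicInteger peak = new AtomicInteger();

    BoundedDatabaseAccess(int maxConcurrentQueries) {
        this.permits = new Semaphore(maxConcurrentQueries);
    }

    /** Hypothetical query: waits for a permit, then simulates database latency. */
    void query() throws InterruptedException {
        permits.acquire(); // a waiting virtual thread parks without holding a carrier thread
        try {
            int now = inFlight.incrementAndGet();
            peak.accumulateAndGet(now, Math::max); // remember the highest concurrency observed
            Thread.sleep(Duration.ofMillis(5));
        } finally {
            inFlight.decrementAndGet();
            permits.release();
        }
    }

    /** Runs `requests` virtual threads against the limiter and returns the peak concurrency seen. */
    static int peakConcurrency(int requests, int maxConcurrentQueries) {
        BoundedDatabaseAccess db = new BoundedDatabaseAccess(maxConcurrentQueries);
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < requests; i++) {
                executor.submit(() -> { db.query(); return null; });
            }
        }
        return db.peak.get();
    }

    public static void main(String[] args) {
        System.out.println("peak concurrent queries = " + peakConcurrency(2_000, 20));
    }
}
```

A real connection pool applies the same back-pressure when callers wait for a free connection, so the pool's acquisition timeout deserves attention once thousands of virtual threads can queue for it.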

Structured Concurrency: Composing Virtual Thread Tasks

Java 21 also introduced Structured Concurrency as a preview API (enabled with --enable-preview), which provides a clean way to coordinate multiple virtual threads launched for a single task. It guarantees that child threads do not outlive their parent scope, which prevents thread leaks and makes error propagation predictable:

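    // Java 21 preview API: compile and run with --enable-preview
    // (imports: java.util.concurrent.StructuredTaskScope, java.util.concurrent.StructuredTaskScope.Subtask)
    try (var scope = new StructuredTaskScope.ShutdownOnFailure()) {
        // In Java 21, fork() returns a Subtask, not a Future
        Subtask<User> user = scope.fork(() -> userService.getUser(id));
        Subtask<Orders> orders = scope.fork(() -> orderService.getOrders(id));

        scope.join().throwIfFailed(); // wait for both forks; rethrow the first failure

        return new UserProfile(user.get(), orders.get());
    }

If either fork throws, the other is cancelled automatically. This pattern replaces verbose CompletableFuture chains with readable, sequential-looking code.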
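StructuredTaskScope is a preview API in Java 21, so some teams cannot use it in production yet. As a point of comparison, the sketch below performs the same two-call fan-out with a virtual-thread-per-task executor, which needs no preview flag. It is not structured concurrency: if one call fails, the other is not cancelled and close() still waits for it. The class, the record, and the two stand-in service methods are hypothetical.

```java
import java.time.Duration;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

final class ProfileFanOut {
    record UserProfile(String user, List<String> orders) {}

    // Hypothetical stand-ins for userService.getUser(id) and orderService.getOrders(id).
    static String getUser(long id) throws InterruptedException {
        Thread.sleep(Duration.ofMillis(30));
        return "user-" + id;
    }

    static List<String> getOrders(long id) throws InterruptedException {
        Thread.sleep(Duration.ofMillis(30));
        return List.of("order-1", "order-2");
    }

    /** Runs both lookups concurrently on virtual threads and combines their results. */
    static UserProfile load(long id) {
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            Future<String> user = executor.submit(() -> getUser(id));
            Future<List<String>> orders = executor.submit(() -> getOrders(id));
            return new UserProfile(user.get(), orders.get());
        } catch (ExecutionException e) {
            throw new IllegalStateException("a lookup failed", e.getCause());
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while loading profile", e);
        }
    }

    public static void main(String[] args) {
        System.out.println(load(42));
    }
}
```

The missing automatic cancellation is the gap that the structured API closes, which is the main reason to prefer it once preview features are acceptable in your environment.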

Performance Benchmarks: Real-World Numbers

In IO-bound benchmarks (simulating JDBC and HTTP calls with realistic latencies), services migrated to virtual threads on Java 21 consistently show 3–10x throughput improvements compared to the same code on fixed thread pools of 200–400 threads. The improvement is most dramatic when individual requests perform multiple sequential IO operations, because each wait releases the carrier thread instead of holding a pooled thread idle. Per-request latency does not shrink; the gain comes from serving more requests concurrently.

CPU-bound workloads show no improvement — virtual threads do not increase the number of available CPU cores. For CPU-intensive work, the traditional fork-join pool or reactive schedulers remain appropriate.
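A comparison of this kind can be approximated with a few lines of code. The harness below is a minimal sketch, not the benchmark behind the figures above: it replaces real I/O with Thread.sleep, measures wall time only, and uses arbitrary task counts and pool sizes. Treat it as a way to see the effect on your own machine, and rely on a proper load test or JMH for numbers you intend to report.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

final class ThroughputSketch {
    /** Submits `tasks` simulated I/O calls, waits for all of them, and returns elapsed wall time in ms. */
    static long elapsedMillis(ExecutorService executor, int tasks, Duration simulatedIo) {
        long start = System.nanoTime();
        try (executor) { // close() blocks until every submitted task has finished
            for (int i = 0; i < tasks; i++) {
                executor.submit(() -> {
                    Thread.sleep(simulatedIo); // stand-in for a JDBC or HTTP round trip
                    return null;
                });
            }
        }
        return Duration.ofNanos(System.nanoTime() - start).toMillis();
    }

    public static void main(String[] args) {
        int tasks = 2_000;                    // arbitrary request count
        Duration io = Duration.ofMillis(50);  // arbitrary simulated latency
        long fixed = elapsedMillis(Executors.newFixedThreadPool(200), tasks, io);
        long virtual = elapsedMillis(Executors.newVirtualThreadPerTaskExecutor(), tasks, io);
        System.out.printf("fixed pool of 200: %d ms, virtual threads: %d ms%n", fixed, virtual);
    }
}
```

With a fixed pool, the tasks run in waves limited by the pool size, while the virtual-thread executor lets all of them wait at the same time. Replacing the sleep with a CPU-bound loop should erase the difference.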

Pros and Cons

Pros: Drastically simplifies high-concurrency code compared to reactive programming. Most blocking libraries work unchanged for IO-bound work. First-class Spring Boot 3.2+ support. Excellent throughput for JDBC and HTTP calls; file IO gains less, because many filesystem operations still block the carrier thread.

Cons: Pinning from synchronized blocks silently eliminates throughput gains in legacy codebases. Thread-local variables can cause unexpected memory retention at scale. Connection pool sizing requires deliberate adjustment. CPU-bound work does not benefit.

Common Mistakes

Assuming all blocking code benefits: Code inside synchronized blocks does not yield. Audit your critical paths with -Djdk.tracePinnedThreads=full before assuming full benefits.

Keeping oversized thread pools: After enabling virtual threads, the old maxThreads settings on Tomcat become irrelevant. Spring Boot's virtual thread mode handles request dispatch automatically. Leaving large explicit executor pools wastes resources.

Ignoring ThreadLocal leaks: At scale, virtual threads that carry expensive objects in ThreadLocals can cause memory pressure. Use ScopedValue (Java 21 preview) as a safer alternative for request-scoped context.
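The ThreadLocal concern is easy to reproduce. A per-thread cache that is allocated 200 times in a pooled server is allocated once per task when every task runs on its own virtual thread. The sketch below counts those allocations; the class name and the 1 KB buffer are hypothetical stand-ins for an expensive per-thread object.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

final class ThreadLocalPerVirtualThread {
    /** Runs `tasks` virtual threads that each touch a ThreadLocal buffer; returns how many buffers were created. */
    static int buffersAllocated(int tasks) {
        AtomicInteger allocations = new AtomicInteger();
        // Typical per-thread cache pattern: cheap with a small pool, multiplied with virtual threads.
        ThreadLocal<byte[]> buffer = ThreadLocal.withInitial(() -> {
            allocations.incrementAndGet();
            return new byte[1024];
        });
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < tasks; i++) {
                // Each task runs on a fresh virtual thread, so the initializer runs again.
                executor.execute(() -> buffer.get()[0] = 1);
            }
        }
        return allocations.get();
    }

    public static void main(String[] args) {
        System.out.println("buffers allocated = " + buffersAllocated(10_000)); // prints buffers allocated = 10000
    }
}
```

Nothing is reused between tasks, so ThreadLocal-based caching loses its purpose here. Passing the object explicitly or sharing a bounded pool of instances are alternatives that do not rely on preview features.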

Key Takeaways

- Java 21 virtual threads offer reactive-level throughput for IO-bound workloads with synchronous code.

- Enable them in Spring Boot 3.2+ with a single property: spring.threads.virtual.enabled=true.

- Audit for synchronized pinning using -Djdk.tracePinnedThreads=full.

- Resize JDBC connection pools based on database capacity, not thread count.

- Structured Concurrency (Java 21 preview) further simplifies concurrent task composition.

Conclusion

Virtual threads are the most impactful Java concurrency improvement in a generation. For teams running IO-heavy Spring Boot services, migrating to Java 21 with spring.threads.virtual.enabled=true is one of the lowest-risk, highest-reward upgrades available. The migration is often a one-property change. The tradeoffs — pinning risks and connection pool retuning — are manageable with a day of profiling and testing. If you are still running Java 17 or earlier, Project Loom alone is a compelling reason to upgrade your production fleet to Java 21.
