Java Multithreading & Concurrency Deep Dive: Virtual Threads, CompletableFuture, and Beyond

Concurrency is one of the hardest topics in Java engineering, and one of the most consequential. Project Loom, delivered in Java 21, rewrote the rules with virtual threads, but knowing when and how to use them requires a solid grasp of the classic concurrency model. This guide covers both.

Table of Contents

- The Java Concurrency Model: What Every Engineer Must Know

- Java 21 Virtual Threads: Loom's Revolution

- CompletableFuture: Asynchronous Pipelines Without Reactive Complexity

- Structured Concurrency (Java 21 Preview)

- Thread Safety Patterns

- Common Concurrency Bugs and How to Avoid Them

- Real-World Problem: Thread Pool Exhaustion Under Bursty Load

- Solution Approach: Choosing the Right Concurrency Model

- Architecture: Bulkheads and Isolation

- Optimization: Benchmarking and Profiling Concurrency

- Conclusion

The Java Concurrency Model: What Every Engineer Must Know

Traditional Java threads (platform threads) map one-to-one to OS threads. Each OS thread reserves a sizable stack (typically around 1 MB by default), and context switches between OS threads are expensive. A traditional Java server handling 10,000 concurrent blocking I/O requests therefore needs 10,000 OS threads, a configuration that pushes most JVMs to their limits. Reactive programming (Spring WebFlux, Project Reactor) addresses this with a small thread pool and non-blocking I/O callbacks, but at the cost of considerably more complex code: callback chains, flatMap gymnastics, and debugging nightmares.

Java 21 Virtual Threads: Loom's Revolution

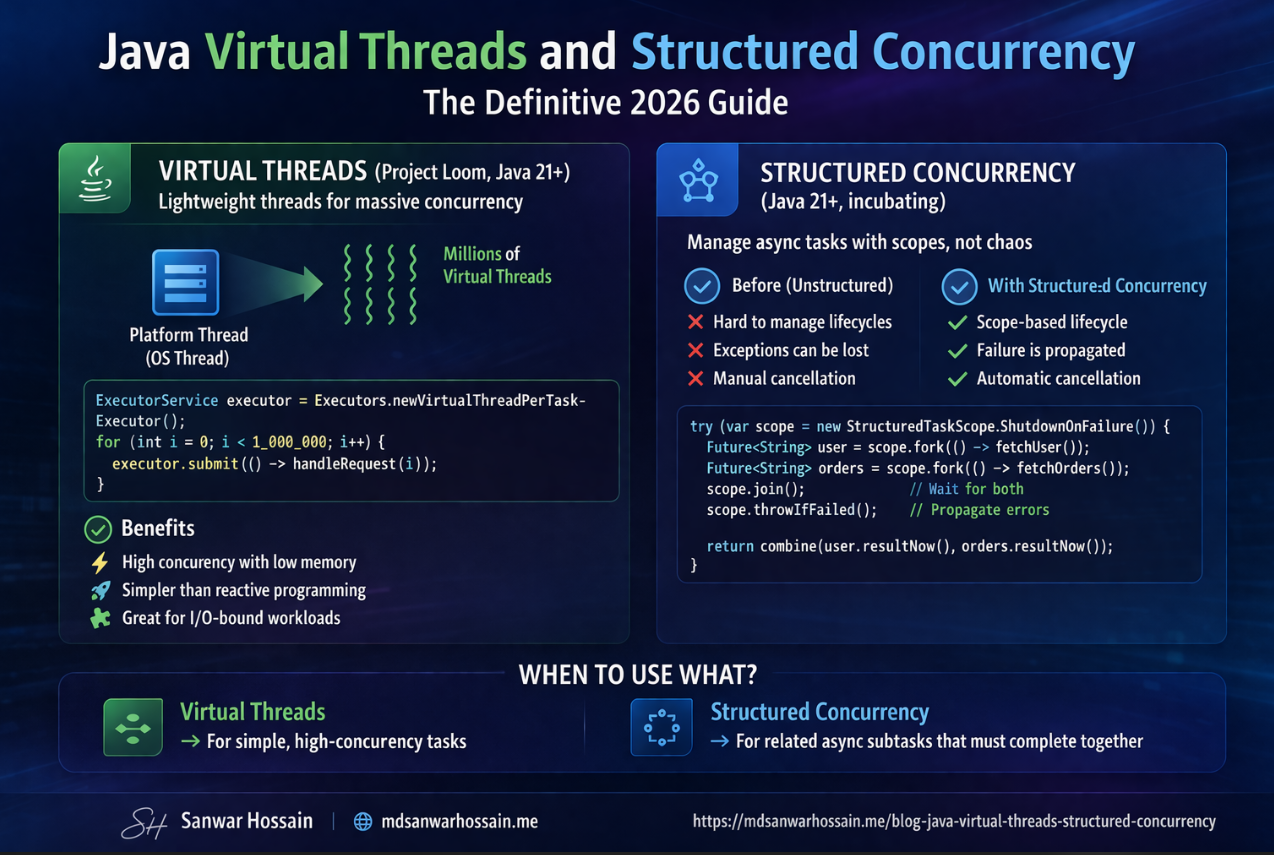

Project Loom, finalized as a standard feature in Java 21, introduces virtual threads — lightweight threads managed by the JVM rather than the OS. A virtual thread is mounted on a carrier OS thread during execution, but unmounted (parked) when it performs a blocking operation, freeing the carrier thread to run other virtual threads. This means you can now write simple blocking code and have the JVM automatically achieve reactive-like scalability.

Creating and Using Virtual Threads

    // Create a virtual thread directly
    Thread vt = Thread.ofVirtual().start(() -> {
        // This blocking call unmounts from carrier thread automatically
        String result = httpClient.get("https://api.example.com/data");
        System.out.println(result);
    });

    // Use virtual thread executor: ideal for server workloads
    ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor();
    List<Future<String>> futures = IntStream.range(0, 10_000)
        .mapToObj(i -> executor.submit(() -> fetchUserData(i)))
        .toList();

    // All 10,000 tasks run on virtual threads; the JVM scales automatically
    futures.forEach(f -> {
        try { System.out.println(f.get()); }
        catch (Exception e) { e.printStackTrace(); }
    });
    executor.shutdown();
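The snippet above depends on an `httpClient` and a `fetchUserData` method that are not shown. A self-contained sketch of the same pattern follows, assuming Java 21 and using a hypothetical `fetchUserData` that simulates blocking I/O with `Thread.sleep`. It also uses try-with-resources, which works because `ExecutorService` has been `AutoCloseable` since Java 19.

```java
import java.time.Duration;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.stream.IntStream;

class VirtualThreadDemo {

    // Hypothetical stand-in for a blocking I/O call such as an HTTP request.
    static String fetchUserData(int id) {
        try {
            Thread.sleep(Duration.ofMillis(50)); // blocking: the virtual thread unmounts here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        String kind = Thread.currentThread().isVirtual() ? "virtual" : "platform";
        return "user-" + id + " (" + kind + ")";
    }

    // Runs `count` blocking tasks, one virtual thread per task, and collects the results.
    static List<String> fetchAll(int count) {
        // close() at the end of the try block waits for all submitted tasks to finish.
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            List<Future<String>> futures = IntStream.range(0, count)
                    .mapToObj(i -> executor.submit(() -> fetchUserData(i)))
                    .toList();
            return futures.stream().map(f -> {
                try {
                    return f.get();
                } catch (Exception e) {
                    throw new IllegalStateException(e);
                }
            }).toList();
        }
    }

    public static void main(String[] args) {
        List<String> results = fetchAll(10_000);
        System.out.println(results.size() + " results, first: " + results.get(0));
    }
}
```

Because each task only sleeps, the whole batch should finish in roughly the time of a single call rather than 10,000 times that; actual timings depend on the machine and are worth measuring.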

Spring Boot and Virtual Threads

Spring Boot 3.2+ supports virtual threads as a first-class configuration. Setting spring.threads.virtual.enabled=true in your application properties switches the entire Tomcat request handler pool to virtual threads. This single configuration change can dramatically improve throughput for I/O-bound Spring Boot services without any code changes.

    # application.yml: enable virtual threads globally
    spring:
      threads:
        virtual:
          enabled: true

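Important caveat: on Java 21, avoid holding a synchronized lock while performing blocking I/O on a virtual thread. A virtual thread that blocks inside a synchronized block or method is pinned to its carrier thread, so the carrier cannot run other virtual threads and the scalability benefit is lost. Use ReentrantLock instead of synchronized in code that performs I/O while holding a lock. JDK 24 (JEP 491) removes this limitation for most uses of synchronized, so the advice matters mainly on Java 21 through 23.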
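As an illustration of the ReentrantLock advice, here is a minimal sketch of a lazily loaded value guarded by a `ReentrantLock`. The `RateCache` class and its `loadRateFromRemote` method are hypothetical; the sleep stands in for a blocking remote call made while the lock is held.

```java
import java.util.concurrent.locks.ReentrantLock;

class RateCache {
    private final ReentrantLock lock = new ReentrantLock();
    private String cachedRate; // guarded by lock
    private int loads;         // guarded by lock

    // Hypothetical stand-in for a slow, blocking remote call.
    private static String loadRateFromRemote() {
        try {
            Thread.sleep(20);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "rate-v1";
    }

    String getRate() {
        lock.lock(); // a virtual thread waiting here parks without pinning its carrier
        try {
            if (cachedRate == null) {
                cachedRate = loadRateFromRemote(); // blocking I/O while holding the lock
                loads++;
            }
            return cachedRate;
        } finally {
            lock.unlock(); // always release in finally
        }
    }

    int loadCount() {
        lock.lock();
        try {
            return loads;
        } finally {
            lock.unlock();
        }
    }

    // Starts `callers` virtual threads that all request the rate; returns how many loads ran.
    static int runDemo(int callers) {
        RateCache cache = new RateCache();
        Thread[] threads = new Thread[callers];
        for (int i = 0; i < callers; i++) {
            threads[i] = Thread.ofVirtual().start(cache::getRate);
        }
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return cache.loadCount();
    }

    public static void main(String[] args) {
        System.out.println("remote loads: " + runDemo(1_000)); // prints "remote loads: 1"
    }
}
```

The lock/try/finally shape is the part that carries over to real code: unlike synchronized, a ReentrantLock is not released automatically when the block exits.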

CompletableFuture: Asynchronous Pipelines Without Reactive Complexity

CompletableFuture, introduced in Java 8, enables composing asynchronous operations in a readable pipeline without the full complexity of reactive programming. It is the right tool when you need asynchronous composition but do not need backpressure or streaming semantics.

    // Parallel calls to three microservices with timeout and error handling.
    // Note: supplyAsync without an executor argument normally runs on ForkJoinPool.commonPool();
    // for blocking calls, consider passing a dedicated executor as the second argument.
    public UserDashboard fetchDashboard(String userId) {
        CompletableFuture<UserProfile> profileFuture =
            CompletableFuture.supplyAsync(() -> userService.getProfile(userId))
                .orTimeout(2, TimeUnit.SECONDS);

        CompletableFuture<List<Order>> ordersFuture =
            CompletableFuture.supplyAsync(() -> orderService.getRecentOrders(userId))
                .orTimeout(2, TimeUnit.SECONDS);

        CompletableFuture<List<Notification>> notificationsFuture =
            CompletableFuture.supplyAsync(() -> notificationService.getUnread(userId))
                .orTimeout(2, TimeUnit.SECONDS)
                .exceptionally(ex -> Collections.emptyList()); // graceful degradation

        // join() rethrows a profile or orders failure as CompletionException
        return CompletableFuture.allOf(profileFuture, ordersFuture, notificationsFuture)
            .thenApply(ignored -> new UserDashboard(
                profileFuture.join(),
                ordersFuture.join(),
                notificationsFuture.join()
            ))
            .join();
    }
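To see the timeout-plus-fallback behaviour in isolation, the following sketch replaces the three services with inline suppliers. Everything here (the `DashboardDemo` class, the `Dashboard` record, the 300 ms timeout, the string payloads) is an assumption for illustration, not part of the original service.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.TimeUnit;

class DashboardDemo {
    record Dashboard(String profile, List<String> orders, List<String> notifications) {}

    private static void pause(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    // notificationDelayMillis simulates how slow the (hypothetical) notification service is.
    static Dashboard fetch(long notificationDelayMillis) {
        CompletableFuture<String> profile =
                CompletableFuture.supplyAsync(() -> "profile-42");
        CompletableFuture<List<String>> orders =
                CompletableFuture.supplyAsync(() -> List.of("order-1", "order-2"));
        CompletableFuture<List<String>> notifications = CompletableFuture
                .supplyAsync(() -> {
                    pause(notificationDelayMillis);
                    return List.of("notification-1");
                })
                .orTimeout(300, TimeUnit.MILLISECONDS) // fails with TimeoutException if too slow
                .exceptionally(ex -> List.of());       // fallback: empty list instead of failure

        // allOf completes when all three are done; the join() calls inside no longer block.
        return CompletableFuture.allOf(profile, orders, notifications)
                .thenApply(ignored -> new Dashboard(
                        profile.join(), orders.join(), notifications.join()))
                .join();
    }

    public static void main(String[] args) {
        System.out.println(fetch(0));     // notifications present
        System.out.println(fetch(1_500)); // notifications replaced by the empty fallback
    }
}
```

One detail worth knowing: `orTimeout` completes the future exceptionally but does not interrupt the supplier, so the slow call keeps running in the background until it finishes on its own.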

Structured Concurrency (Java 21 Preview)

Structured concurrency treats a group of related concurrent tasks as a single unit of work. With the ShutdownOnFailure policy used below, the first subtask failure cancels the remaining sibling subtasks. When the scope closes, all subtasks are guaranteed to have completed or been cancelled. This makes concurrent code nearly as predictable and readable as sequential code, and it avoids the thread leaks and lost errors that are common with unstructured concurrent code.

    // Structured concurrency: all subtasks cancel if any fails.
    // Preview API in Java 21: compile and run with --enable-preview.
    public UserDashboard fetchDashboardStructured(String userId)
            throws InterruptedException, ExecutionException {
        try (var scope = new StructuredTaskScope.ShutdownOnFailure()) {
            Subtask<UserProfile> profile = scope.fork(() -> userService.getProfile(userId));
            Subtask<List<Order>> orders = scope.fork(() -> orderService.getRecentOrders(userId));
            Subtask<List<Notification>> notifs = scope.fork(() -> notificationService.getUnread(userId));

            scope.join();          // wait for all subtasks
            scope.throwIfFailed(); // rethrow first failure wrapped in ExecutionException

            return new UserDashboard(profile.get(), orders.get(), notifs.get());
        }
    }

Thread Safety Patterns

Immutability

Immutable objects are inherently thread-safe — they can be shared across threads without synchronization. Use Java records (Java 16+) for value objects and DTOs. Mark fields final where possible. For mutable shared state, prefer thread-safe data structures from java.util.concurrent.
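A record is only as immutable as its components. The sketch below, using a hypothetical `OrderSummary` record, shows one way to keep a record with a collection component safe to share: copy the list in the compact constructor so later changes to the caller's list cannot leak in.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical value object; the record's fields are implicitly final.
record OrderSummary(String orderId, List<String> items) {
    // Compact constructor: runs before the fields are assigned.
    OrderSummary {
        items = List.copyOf(items); // unmodifiable copy, detached from the caller's list
    }
}

class ImmutabilityDemo {
    public static void main(String[] args) {
        List<String> source = new ArrayList<>(List.of("book"));
        OrderSummary summary = new OrderSummary("o-1", source);

        source.add("pen"); // mutating the original list does not affect the record
        System.out.println(summary.items()); // prints [book]

        try {
            summary.items().add("laptop"); // the copy rejects modification
        } catch (UnsupportedOperationException e) {
            System.out.println("items are read-only");
        }
    }
}
```

Without the copy, any thread holding a reference to the original `ArrayList` could mutate the record's contents while another thread reads them.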

Atomic Variables

For simple numeric counters and flags shared across threads, AtomicInteger, AtomicLong, and AtomicReference provide lock-free compare-and-swap operations that are typically cheaper than synchronized blocks. Under very heavy write contention, CAS retries add up, and LongAdder usually scales better for pure counters.

    // Thread-safe counter without synchronization overhead
    private final AtomicLong requestCount = new AtomicLong(0);
    private final AtomicLong errorCount = new AtomicLong(0);

    public void recordRequest(boolean success) {
        requestCount.incrementAndGet();
        if (!success) errorCount.incrementAndGet();
    }

    public double errorRate() {
        long total = requestCount.get();
        return total == 0 ? 0.0 : (double) errorCount.get() / total;
    }
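When a counter is written by many threads far more often than it is read, `LongAdder` from `java.util.concurrent.atomic` is an alternative worth measuring: it spreads updates across internal cells and sums them on read. The sketch below is a variant of the counter above under that assumption; the `RequestMetrics` class and its `simulate` helper are hypothetical.

```java
import java.util.concurrent.atomic.LongAdder;

class RequestMetrics {
    private final LongAdder requests = new LongAdder();
    private final LongAdder errors = new LongAdder();

    void record(boolean success) {
        requests.increment();
        if (!success) errors.increment();
    }

    long totalRequests() {
        return requests.sum();
    }

    double errorRate() {
        long total = requests.sum();
        return total == 0 ? 0.0 : (double) errors.sum() / total;
    }

    // Each of `threads` threads records `perThread` requests; every 10th request fails.
    static RequestMetrics simulate(int threads, int perThread) {
        RequestMetrics metrics = new RequestMetrics();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    metrics.record(i % 10 != 0);
                }
            });
            workers[t].start();
        }
        for (Thread worker : workers) {
            try {
                worker.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return metrics;
    }

    public static void main(String[] args) {
        RequestMetrics metrics = simulate(8, 100_000);
        System.out.println(metrics.totalRequests() + " requests, error rate " + metrics.errorRate());
    }
}
```

The trade-off is that `sum()` is not an atomic snapshot while updates are in flight, which is usually acceptable for metrics but not for values that drive control flow.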

ConcurrentHashMap and Thread-Safe Collections

ConcurrentHashMap is the workhorse of concurrent Java programming. Unlike the synchronized Hashtable, which guards every operation with a single lock, it allows concurrent reads and writes with high throughput: Java 7 and earlier used segment-level locking, while Java 8+ uses lock-free reads with CAS and per-bin locking for writes. Use computeIfAbsent for atomic get-or-create operations, a common pattern for caches and registries.
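A minimal sketch of the get-or-create pattern follows. The `ConnectionRegistry` class and the string "connections" are hypothetical placeholders; the point is that `computeIfAbsent` runs the factory at most once per absent key, even when many threads ask at the same time.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

class ConnectionRegistry {
    private final ConcurrentHashMap<String, String> byHost = new ConcurrentHashMap<>();
    private final AtomicInteger created = new AtomicInteger();

    // Returns the existing entry for the host, or atomically creates and stores one.
    String get(String host) {
        return byHost.computeIfAbsent(host, h -> {
            created.incrementAndGet(); // counts how often the factory actually ran
            return "connection-to-" + h;
        });
    }

    int createdCount() {
        return created.get();
    }

    // `callers` threads request the same host concurrently; returns how many entries were created.
    static int runDemo(int callers) {
        ConnectionRegistry registry = new ConnectionRegistry();
        Thread[] threads = new Thread[callers];
        for (int i = 0; i < callers; i++) {
            threads[i] = new Thread(() -> registry.get("db.internal"));
            threads[i].start();
        }
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return registry.createdCount();
    }

    public static void main(String[] args) {
        System.out.println("factory invocations: " + runDemo(64)); // prints "factory invocations: 1"
    }
}
```

Keep the mapping function short and never let it modify the same map: other updates to that bin wait while it runs.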

Common Concurrency Bugs and How to Avoid Them

Race conditions: Multiple threads read and write shared state without synchronization, producing inconsistent results. Prevent by using atomic variables, concurrent collections, or explicit locks.

Deadlocks: Thread A holds lock L1 and waits for L2; Thread B holds L2 and waits for L1. Prevent by acquiring locks in a consistent global order, using tryLock with timeouts, or avoiding nested locking.

Memory visibility: A write by Thread A is not guaranteed to be visible to Thread B without synchronization. Use volatile for flags, explicit synchronization for compound operations, or rely on the happens-before guarantees of concurrent collections and CompletableFuture.

Thread pool exhaustion: A task running in a fixed-size thread pool that submits further blocking tasks to the same pool and waits for their results can deadlock once every worker is waiting. With virtual threads, this problem largely disappears, but it remains relevant for platform thread pools.
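The lock-ordering rule from the deadlock entry can be sketched as follows. The `Transfers` and `Account` classes are hypothetical, and the sketch assumes every account has a unique id: both locks are always taken in ascending id order, so two opposite transfers can never each hold one lock while waiting for the other.

```java
import java.util.concurrent.locks.ReentrantLock;

class Transfers {
    static final class Account {
        final long id; // assumed unique; defines the global lock order
        final ReentrantLock lock = new ReentrantLock();
        long balance;  // guarded by lock

        Account(long id, long balance) {
            this.id = id;
            this.balance = balance;
        }
    }

    static void transfer(Account from, Account to, long amount) {
        // Always lock the account with the smaller id first, regardless of direction.
        Account first = from.id < to.id ? from : to;
        Account second = (first == from) ? to : from;
        first.lock.lock();
        try {
            second.lock.lock();
            try {
                from.balance -= amount;
                to.balance += amount;
            } finally {
                second.lock.unlock();
            }
        } finally {
            first.lock.unlock();
        }
    }

    // Two threads transfer in opposite directions; returns the combined balance afterwards.
    static long runDemo(int transfersPerThread) {
        Account a = new Account(1, 1_000);
        Account b = new Account(2, 1_000);
        Thread t1 = new Thread(() -> {
            for (int i = 0; i < transfersPerThread; i++) transfer(a, b, 1);
        });
        Thread t2 = new Thread(() -> {
            for (int i = 0; i < transfersPerThread; i++) transfer(b, a, 1);
        });
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return a.balance + b.balance; // safe to read: join() establishes happens-before
    }

    public static void main(String[] args) {
        System.out.println("total balance: " + runDemo(100_000)); // prints "total balance: 2000"
    }
}
```

If `transfer` instead locked `from` first and `to` second, the two threads in `runDemo` could each grab one lock and wait forever for the other.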

"Virtual threads do not eliminate the need to understand Java's concurrency model — they raise the floor so that blocking I/O no longer kills scalability. But data races, deadlocks, and visibility bugs remain just as dangerous."

Key Takeaways

- Virtual threads (Java 21) dramatically increase I/O throughput with simple blocking code — enable them in Spring Boot with a single property.

- Avoid synchronized blocks that hold locks during I/O: they pin virtual threads. Use ReentrantLock instead.

- CompletableFuture is the right tool for composable async pipelines without reactive programming complexity.

- Structured concurrency (Java 21 preview) makes concurrent sub-task management as safe and readable as sequential code.

- Classic concurrency bugs — race conditions, deadlocks, memory visibility — remain just as dangerous in the virtual thread era.

Real-World Problem: Thread Pool Exhaustion Under Bursty Load

A logistics platform running Spring MVC with a Tomcat thread pool of 200 threads served normally at 1,500 requests/second with 80ms average response time. During a promotional event, traffic spiked to 4,000 requests/second. Each request made two downstream HTTP calls to shipping rate APIs that averaged 120ms under normal conditions but degraded to 800ms under the event load. Within 30 seconds, all 200 Tomcat threads were blocked waiting for slow downstream responses. New requests queued up, queue capacity was exceeded, and the service returned 503 errors for the entire 12-minute peak window — affecting tens of thousands of users.

The post-incident investigation revealed that the team had never load tested at more than 2× normal traffic. The fix involved three changes: enabling virtual threads (eliminating the fixed pool constraint), adding a 500ms timeout with fallback caching on downstream shipping rate calls, and implementing bulkheads to prevent shipping rate API slowness from affecting other request types. After these changes, the same traffic spike was absorbed with p99 latency of 210ms and zero 503 errors.
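The arithmetic behind the outage can be checked with Little's Law (requests in flight = arrival rate × time per request). The sketch below plugs in the figures from the incident; treating 800 ms as the per-request time during the spike is an assumption and a lower bound, since each request made two downstream calls and the text does not say whether they ran sequentially.

```java
class LittlesLaw {
    // Little's Law: average requests in flight = arrival rate × average time per request.
    static double requestsInFlight(long requestsPerSecond, long latencyMillis) {
        return requestsPerSecond * latencyMillis / 1000.0;
    }

    // Upper bound on throughput when each request occupies one thread for latencyMillis.
    static double maxThroughput(int threads, long latencyMillis) {
        return threads * 1000.0 / latencyMillis;
    }

    public static void main(String[] args) {
        // Normal load: 1,500 req/s at 80 ms fits comfortably within 200 threads.
        System.out.println(requestsInFlight(1_500, 80));  // prints 120.0

        // Spike: 4,000 req/s at (at least) 800 ms needs far more than 200 threads.
        System.out.println(requestsInFlight(4_000, 800)); // prints 3200.0

        // With 200 threads each held for 800 ms, throughput is capped well below demand.
        System.out.println(maxThroughput(200, 800));      // prints 250.0
    }
}
```

Under these assumptions the pool could sustain at most 250 requests/second against 4,000 arriving, which is consistent with the queue filling within seconds.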

Solution Approach: Choosing the Right Concurrency Model

The right concurrency primitive depends on the workload shape. For I/O-bound server workloads handling independent requests, virtual threads with Spring Boot 3.2+ are usually the simplest choice: they remove the fixed-pool bottleneck, often without code changes. For parallel aggregation of independent service calls within a single request, CompletableFuture pipelines provide readable async composition with explicit error handling per call. For workflows where subtasks must be treated as a unit (any failure cancels siblings), StructuredTaskScope provides cancellation and lifetime guarantees that CompletableFuture does not give you by default. For CPU-bound parallel processing of data collections, the Fork/Join pool via parallel streams remains the appropriate tool.

Mixing these models is where teams get into trouble. The most common error is submitting blocking tasks to the common ForkJoinPool (used by parallel streams) which starves CPU-bound parallel work. Keep I/O and CPU work on separate executors, and always measure before assuming a concurrency change improves performance.
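One way to keep the two kinds of work apart is sketched below, assuming Java 21: blocking calls are handed to a virtual-thread executor through the two-argument `supplyAsync`, while CPU-bound work stays on a parallel stream. The class and method names are hypothetical, and the sleep stands in for real I/O.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.stream.LongStream;

class SeparateExecutors {
    private static void pause(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    // Blocking work: runs on a virtual thread, so no common-pool worker is tied up.
    static boolean blockingCallRanOnVirtualThread() {
        try (ExecutorService ioExecutor = Executors.newVirtualThreadPerTaskExecutor()) {
            return CompletableFuture.supplyAsync(() -> {
                pause(50); // simulated blocking I/O
                return Thread.currentThread().isVirtual();
            }, ioExecutor).join();
        }
    }

    // CPU-bound work: a parallel stream, which uses the common ForkJoinPool.
    static long sumOfSquares(long n) {
        return LongStream.rangeClosed(1, n).parallel().map(x -> x * x).sum();
    }

    public static void main(String[] args) {
        System.out.println("I/O on virtual thread: " + blockingCallRanOnVirtualThread());
        System.out.println("sum of squares up to 1000: " + sumOfSquares(1_000));
    }
}
```

The important detail is the second argument to `supplyAsync`: leaving it out sends the blocking call to the same pool the parallel stream relies on.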

Architecture: Bulkheads and Isolation

Production concurrency architecture is about isolation as much as throughput. The bulkhead pattern assigns separate thread pools (or virtual thread executors) to different types of work, so that a slow downstream dependency does not consume resources needed by fast local operations. A typical pattern for a microservice with multiple downstream dependencies:

    // Separate executors isolate slow external calls from fast local work
    ExecutorService shippingRateExecutor =
        Executors.newVirtualThreadPerTaskExecutor(); // unbounded virtual threads
    ExecutorService inventoryExecutor =
        Executors.newVirtualThreadPerTaskExecutor();

    // Fast local computation uses common pool or a separate bounded executor
    ExecutorService pricingExecutor =
        Executors.newFixedThreadPool(
            Runtime.getRuntime().availableProcessors());

    // Bulkhead: shipping slowness cannot affect inventory or pricing
    CompletableFuture<ShippingRate> rate = CompletableFuture
        .supplyAsync(() -> shippingApi.getRate(order), shippingRateExecutor)
        .orTimeout(500, TimeUnit.MILLISECONDS)
        .exceptionally(ex -> ShippingRate.cached(order.getDestination()));
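An unbounded virtual-thread executor separates work by type, but it does not by itself cap how many calls to a dependency are in flight. A common way to add that cap is a `Semaphore`. The sketch below is one possible shape, assuming Java 21; the `Bulkhead` class, its parameters, and the string results are hypothetical.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Semaphore;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

class Bulkhead {
    private final Semaphore permits;

    Bulkhead(int maxConcurrentCalls) {
        this.permits = new Semaphore(maxConcurrentCalls);
    }

    // Runs the task if a permit becomes free within maxWaitMillis; otherwise uses the fallback.
    <T> T call(Supplier<T> task, Supplier<T> fallback, long maxWaitMillis) {
        boolean acquired;
        try {
            acquired = permits.tryAcquire(maxWaitMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return fallback.get();
        }
        if (!acquired) {
            return fallback.get(); // dependency saturated: degrade instead of piling up
        }
        try {
            return task.get();
        } finally {
            permits.release();
        }
    }

    // Fires `callers` concurrent calls through a bulkhead and reports the peak concurrency seen.
    static int maxObservedConcurrency(int limit, int callers) {
        Bulkhead bulkhead = new Bulkhead(limit);
        AtomicInteger inFlight = new AtomicInteger();
        AtomicInteger maxSeen = new AtomicInteger();
        Supplier<String> task = () -> {
            int now = inFlight.incrementAndGet();
            maxSeen.accumulateAndGet(now, Math::max);
            try {
                Thread.sleep(20); // simulated slow downstream call
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
            inFlight.decrementAndGet();
            return "live-rate";
        };
        Supplier<String> fallback = () -> "cached-rate";
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < callers; i++) {
                executor.execute(() -> bulkhead.call(task, fallback, 10_000));
            }
        } // close() waits for all calls to finish
        return maxSeen.get();
    }

    public static void main(String[] args) {
        System.out.println("peak concurrent calls: " + maxObservedConcurrency(5, 100));
    }
}
```

With a limit of 5, the reported peak never exceeds 5 no matter how many virtual threads are started, which is the isolation property the bulkhead pattern is after.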

Optimization: Benchmarking and Profiling Concurrency

Concurrency optimizations must be measured, not assumed. JMH (Java Microbenchmark Harness) provides reliable microbenchmarks for comparing lock implementations, atomic operations, and collection access patterns. For system-level measurement, Java Flight Recorder (JFR) shows thread activity, lock contention hot spots, and thread park events; its jdk.VirtualThreadPinned event reports when a virtual thread blocked while pinned to its carrier.

When debugging high contention, jstack thread dumps or JFR's lock events reveal which locks are contended most often and by which threads. A ConcurrentHashMap with computeIfAbsent under very high concurrency can still exhibit significant contention if many callers hit the same key or keys that land in the same bin. In these cases, striped locking (Guava's Striped) or a dedicated cache library like Caffeine, which uses a Window TinyLFU eviction policy and buffers access events to keep contention low, is a better choice.
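To make the idea of striped locking concrete, here is a hand-rolled sketch. It is not Guava's `Striped` implementation, only an illustration of the technique under simple assumptions: keys are hashed onto a fixed array of locks, so threads working on keys in different stripes do not block each other. The `StripedCounts` class is hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.concurrent.locks.ReentrantLock;

class StripedCounts {
    private final ReentrantLock[] locks;
    private final List<Map<String, Long>> shards = new ArrayList<>(); // one plain map per stripe

    StripedCounts(int stripes) {
        locks = new ReentrantLock[stripes];
        for (int i = 0; i < stripes; i++) {
            locks[i] = new ReentrantLock();
            shards.add(new HashMap<>());
        }
    }

    // floorMod keeps the index non-negative even for negative hash codes.
    private int stripeOf(String key) {
        return Math.floorMod(key.hashCode(), locks.length);
    }

    void increment(String key) {
        int stripe = stripeOf(key);
        locks[stripe].lock(); // only callers mapped to this stripe contend here
        try {
            shards.get(stripe).merge(key, 1L, Long::sum);
        } finally {
            locks[stripe].unlock();
        }
    }

    long get(String key) {
        int stripe = stripeOf(key);
        locks[stripe].lock();
        try {
            return shards.get(stripe).getOrDefault(key, 0L);
        } finally {
            locks[stripe].unlock();
        }
    }

    // Each thread increments keys k0..k9 in round-robin order, perThread times in total.
    static StripedCounts runDemo(int threads, int perThread) {
        StripedCounts counts = new StripedCounts(16);
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) counts.increment("k" + (i % 10));
            });
            workers[t].start();
        }
        for (Thread worker : workers) {
            try {
                worker.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        System.out.println("k0 = " + runDemo(8, 1_000).get("k0")); // prints "k0 = 800"
    }
}
```

The number of stripes is a tuning knob: more stripes reduce the chance that two hot keys share a lock, at the cost of more lock objects. Whether it beats a plain ConcurrentHashMap for a given workload is something to confirm with a benchmark.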

Conclusion

Java concurrency in 2026 is both simpler and more nuanced than it was five years ago. Virtual threads dramatically lower the barrier to scalable I/O-bound services, but they do not remove the need to understand synchronization, visibility, and deadlock. The engineers who will build the most reliable systems are those who understand the full concurrency model — from the Java Memory Model's happens-before rules to the practical mechanics of virtual thread pinning — and choose the right abstraction for each problem rather than defaulting to a single tool for everything. Invest in this understanding; it is one of the highest-leverage skills in backend Java engineering.
